
Machine learning drives gesture control for resource-limited devices

As a cornerstone of artificial intelligence (AI), machine learning enables smart systems to perform increasingly complex cognitive tasks without requiring explicit instructions. One interesting application of modern machine learning is high-accuracy gesture detection on portable devices.

This ability to control devices with gestures alone leads to smoother, safer, and more intuitive user experiences and has the potential to transform the way humans interact with different products.

Gesture control in action

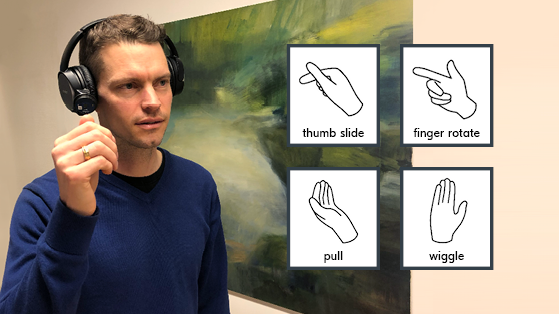

A real-world example of gesture control comes from Imagimob, a software company based in Stockholm, Sweden. The company has collaborated with Acconeer, a radar technology company, to create a gesture control platform that combines edge AI and radar. The platform targets consumer electronic products and other embedded systems where size, cost, power consumption, and memory and processor resources are key constraints.

Imagimob’s gesture detection technology can be embedded into any hardware device. As a first demonstration, the two companies have produced a gesture-controlled headphone using Acconeer’s XM122 IoT Module, which collects raw radar data detailed enough for very fine gestures to be recognized. The module runs an Imagimob AI model trained to recognize five main hand gestures (covering the sensor, wiggling, pulling, sliding the thumb, and rotating a finger) with high precision and close to zero latency. These hand gestures control the operation of over-ear, on-ear, and even in-ear headphones. The AI model can also be retrained to recognize any other type of gesture or motion input.

Machine learning is used to extract the relevant information from a mass of data, allowing the device to respond only when a response is needed. For example, a random hand movement like scratching one’s head, or the proximity of a flying insect, will correctly be interpreted as irrelevant. Only the bona fide, bespoke gestures generate defined actions such as raising the volume on the headphones.
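One common way to implement this kind of filtering is to act only on confident predictions and to treat everything else as background. The sketch below illustrates that idea under stated assumptions; it is not Imagimob's implementation. The gesture names come from the article, while the `background` class, the 0.9 threshold, and the gesture-to-action mapping are hypothetical.

```python
from typing import Optional, Sequence

# Gesture names follow the article; "background" and every action name
# below are hypothetical and exist only for this illustration.
LABELS = ["cover", "wiggle", "pull", "slide_thumb", "rotate_finger", "background"]
ACTIONS = {
    "cover": "play_pause",
    "wiggle": "next_track",
    "pull": "answer_call",
    "slide_thumb": "volume_up",
    "rotate_finger": "volume_down",
}


def select_action(probabilities: Sequence[float], threshold: float = 0.9) -> Optional[str]:
    """Return an action only for a confident, non-background prediction."""
    if len(probabilities) != len(LABELS):
        raise ValueError("expected one probability per label")
    # Index of the most probable class.
    best = max(range(len(LABELS)), key=lambda i: probabilities[i])
    label = LABELS[best]
    # Ignore background motion and anything the model is unsure about.
    if label == "background" or probabilities[best] < threshold:
        return None
    return ACTIONS[label]
```

With this structure, an ambiguous movement yields `None` and the headphones do nothing, while a clear gesture maps to exactly one action. The threshold trades missed gestures against false triggers and would need tuning on real data.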

Imagimob Gesture Detection Library

The Imagimob Gesture Detection Library comprises an artificial neural network designed for time-series data. To cope with the large variations in how different users perform the same gesture, the AI library has been trained on gestures performed by several people. Extremely high requirements have been placed on the accuracy of the AI model itself to ensure this application works robustly.

Imagimob’s AI library is specifically designed with a small memory footprint of approximately 80 kB RAM and processes roughly 30 kB of data per second. The execution time of the AI model is roughly 70 ms, meaning that it predicts a gesture at approximately 14.3 Hz.
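The figures above can be checked with a few lines of arithmetic. This sketch assumes the model runs back to back, so that the prediction rate is simply the inverse of the execution time; the data-per-prediction value is derived from the quoted figures and is not stated in the source.

```python
# Approximate figures quoted in the article.
EXECUTION_TIME_S = 0.070    # about 70 ms per model execution
DATA_RATE_KB_PER_S = 30.0   # about 30 kB of data processed per second


def prediction_rate_hz(execution_time_s: float) -> float:
    """Predictions per second when executions run back to back (assumption)."""
    return 1.0 / execution_time_s


def data_per_prediction_kb(data_rate_kb_per_s: float, execution_time_s: float) -> float:
    """Amount of sensor data that arrives during one model execution."""
    return data_rate_kb_per_s * execution_time_s


rate = prediction_rate_hz(EXECUTION_TIME_S)
chunk = data_per_prediction_kb(DATA_RATE_KB_PER_S, EXECUTION_TIME_S)
print(f"{rate:.1f} Hz, {chunk:.1f} kB per prediction")
```

The result is about 14.3 predictions per second, matching the article, with roughly 2.1 kB of new data arriving during each execution.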

Nordic-powered wireless solution

To support gesture control, the XM122 IoT Module integrated into the headphone demonstration combines Nordic’s nRF52840 advanced multiprotocol SoC with Acconeer’s proprietary pulsed coherent radar, enabling millimeter-level radar distance ranging and presence detection. In operation, the Nordic SoC’s 32-bit, 64-MHz Arm Cortex-M4 processor with floating-point unit (FPU), supported by 1 MB Flash and 256 kB RAM, is responsible for running Acconeer’s Radar System Software (RSS) firmware and the Imagimob Gesture Detection Library to register and process the collected radar data. The Nordic SoC-enabled Bluetooth LE connectivity then wirelessly sends just the meaningful information to the connected device.
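To put the memory figures in context, the short calculation below compares the library's quoted footprint of approximately 80 kB with the SoC's 256 kB of RAM. It is a rough budget only: the source does not say how the remaining RAM is divided between the RSS firmware, the Bluetooth LE stack, and the application.

```python
SOC_RAM_KB = 256       # nRF52840 RAM, as quoted in the article
LIBRARY_RAM_KB = 80    # approximate footprint of the gesture library


def ram_budget(total_kb: int, used_kb: int) -> tuple[float, int]:
    """Return (fraction of RAM used, kB left over) for one component."""
    if not 0 <= used_kb <= total_kb:
        raise ValueError("used_kb must be between 0 and total_kb")
    return used_kb / total_kb, total_kb - used_kb


fraction, remaining_kb = ram_budget(SOC_RAM_KB, LIBRARY_RAM_KB)
print(f"library uses {fraction:.1%} of RAM, {remaining_kb} kB remain")
```

On these numbers the library occupies a little under a third of the SoC's RAM, which leaves about 176 kB for everything else running on the module.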

Edge AI applications

Many applications will benefit from machine learning and Edge AI. As with the gesture-controlled headphones, various pretrained and retrained intelligent products will be able to collect data using sensors, analyze that data for relevant patterns, and send a signal only when action is needed. Such technology creates enormous opportunities for OEMs and consumers alike.
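The "send a signal only when action is needed" pattern can be sketched as a loop that forwards an event only when a new one appears. This is an illustrative outline, not code from any of the products mentioned; `run_edge_loop`, the event names, and the `send` callback are hypothetical stand-ins for an on-device classifier and a wireless link.

```python
from typing import Callable, Iterable, List, Optional


def run_edge_loop(predictions: Iterable[Optional[str]], send: Callable[[str], None]) -> int:
    """Forward a prediction only when it starts a new event; return the count sent."""
    sent = 0
    previous: Optional[str] = None
    for event in predictions:
        # None means "nothing relevant detected", so nothing is transmitted.
        # A repeated event is also suppressed until the gesture ends.
        if event is not None and event != previous:
            send(event)
            sent += 1
        previous = event
    return sent


# Usage: seven model outputs result in only two transmissions.
outbox: List[str] = []
stream = [None, None, "volume_up", "volume_up", None, "volume_up", None]
count = run_edge_loop(stream, outbox.append)
```

Keeping the radio silent except for real events is what lets a device of this kind stay within a small power budget, since the raw sensor data never leaves the module.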


Imagimob has created Imagimob AI, a software suite for the development of Edge AI applications. It allows OEMs, even those without deep AI knowledge, to leverage the power of AI to develop small, power-frugal, low-cost end devices with intelligence and data processing capabilities. Along with radar, the suite can use data from any sensor that produces time-series data, such as an accelerometer, gyroscope, temperature sensor, RPM (revolutions per minute) sensor, or throttle position sensor (from an engine). Importantly, the software can be used by the developers themselves, and in turn, these end devices can provide actionable real-time insights.

When gesture control is part of a complete ecosystem comprising hardware, firmware, and associated apps, it delivers on its promise. Imagimob helps third-party developers create such intelligent products and services based on Edge AI software applications. In the process, the company is successfully deploying machine learning and its wide-ranging capabilities to drive the future of gesture control.